
Introduction

In today’s data-driven world, businesses rely on database virtualization tools to streamline data access and management. These tools enable organizations to integrate and query data from multiple sources without duplicating it. As a result, companies can respond faster to new data requests and cut the storage costs of redundant copies. With the growing demand for real-time insights, data virtualization is becoming a crucial part of modern IT infrastructure.

Moreover, data virtualization simplifies complex data environments by providing a unified access layer. This approach not only improves scalability but also ensures seamless integration with cloud and on-premises systems. By eliminating traditional data silos, businesses can make faster, data-driven decisions. As technology evolves, selecting the right tool is essential for maximizing efficiency and security.

What Are Database Virtualization Tools?

Database virtualization tools enable organizations to access, manage, and integrate data from multiple sources without physically moving or duplicating it. These tools create a unified view of data, improving efficiency and flexibility in data management. By bridging different databases, they help businesses streamline operations and enhance decision-making. Here are some key benefits of using these tools:

- Seamless access: Connects multiple databases as one.

- Faster performance: Optimizes query execution.

- Strong security: Ensures data protection.

- High flexibility: Supports various environments.
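To make "connects multiple databases as one" concrete, here is a minimal sketch using Python's built-in `sqlite3` module and SQLite's `ATTACH DATABASE` statement. It assumes two hypothetical SQLite files standing in for a sales system and a CRM. Commercial tools federate across different database engines and SaaS APIs rather than SQLite files, so treat this as an analogy for the unified view, not as how any product below works internally.

```python
import os
import sqlite3
import tempfile
from typing import List, Tuple


def create_source(path: str, script: str) -> None:
    """Create a standalone SQLite file that plays the role of one source system."""
    con = sqlite3.connect(path)
    con.executescript(script)
    con.commit()
    con.close()


def unified_totals(sales_path: str, crm_path: str) -> List[Tuple[str, int]]:
    """Join tables that live in two separate database files, without copying rows."""
    con = sqlite3.connect(sales_path)
    try:
        # ATTACH exposes the second file under the schema name "crm" in this session.
        con.execute("ATTACH DATABASE ? AS crm", (crm_path,))
        return con.execute(
            """
            SELECT c.name, SUM(o.amount) AS total
            FROM orders AS o
            JOIN crm.customers AS c ON c.id = o.customer_id
            GROUP BY c.name
            ORDER BY c.name
            """
        ).fetchall()
    finally:
        con.close()


def demo() -> List[Tuple[str, int]]:
    with tempfile.TemporaryDirectory() as tmp:
        sales = os.path.join(tmp, "sales.db")  # hypothetical sales system
        crm = os.path.join(tmp, "crm.db")      # hypothetical CRM system
        create_source(sales, """
            CREATE TABLE orders (id INTEGER, customer_id INTEGER, amount INTEGER);
            INSERT INTO orders VALUES (1, 1, 100), (2, 1, 50), (3, 2, 75);
        """)
        create_source(crm, """
            CREATE TABLE customers (id INTEGER, name TEXT);
            INSERT INTO customers VALUES (1, 'Acme'), (2, 'Globex');
        """)
        return unified_totals(sales, crm)


if __name__ == "__main__":
    print(demo())  # [('Acme', 150), ('Globex', 75)]
```

The join runs in one session even though `orders` and `customers` live in different files, and no rows are copied between them.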

Top 10 Database Virtualization Tools in 2025

The leading tools in 2025 enable seamless data integration, real-time access, and enhanced analytics. Solutions such as Denodo and IBM Cloud Pak for Data drive efficiency and smarter decision-making.

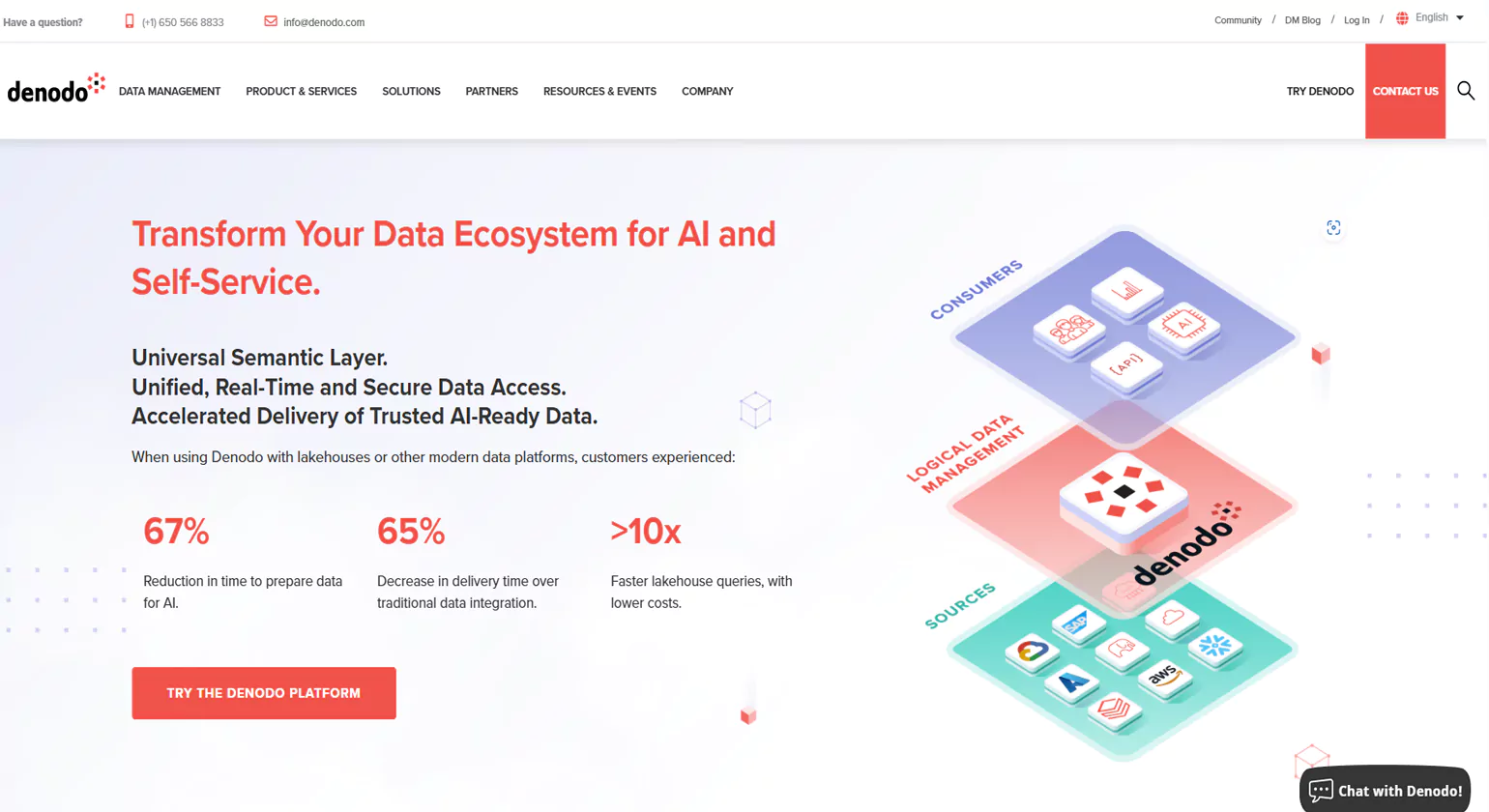

1. Denodo Platform

Denodo is a global leader in data management, offering one of the most advanced database virtualization tools on the market. The Denodo Platform provides a logical approach to data integration, management, and delivery, enabling businesses to achieve self-service BI, advanced analytics, and seamless hybrid/multi-cloud integration. Denodo reports customer results of over 400% ROI with payback within six months, and it serves enterprises across 30+ industries.

Unique Selling Points (USPs)

- Logical Data Integration: Eliminates data silos without physically moving data.

- AI-Driven Performance: Features AI-powered query optimization and intelligent recommendations.

- Hybrid & Multi-Cloud Readiness: Seamless integration across cloud platforms, including AWS, Google Cloud, and Azure.

- Self-Service Data Access: Empowers business users with easy-to-access and governed data.

- Enterprise-Grade Security: Supports advanced security policies, including attribute-based access control.

Key Features

- Data Virtualization & Federation – Provides a unified view of data across disparate sources without replication.

- AI-Powered Data Catalog – Enables natural language queries and AI-driven insights for business users.

- Query Optimization & Acceleration – Uses dynamic query execution and MPP engines for high performance.

- Cloud & On-Premises Support – Compatible with all major cloud providers and on-premise databases.

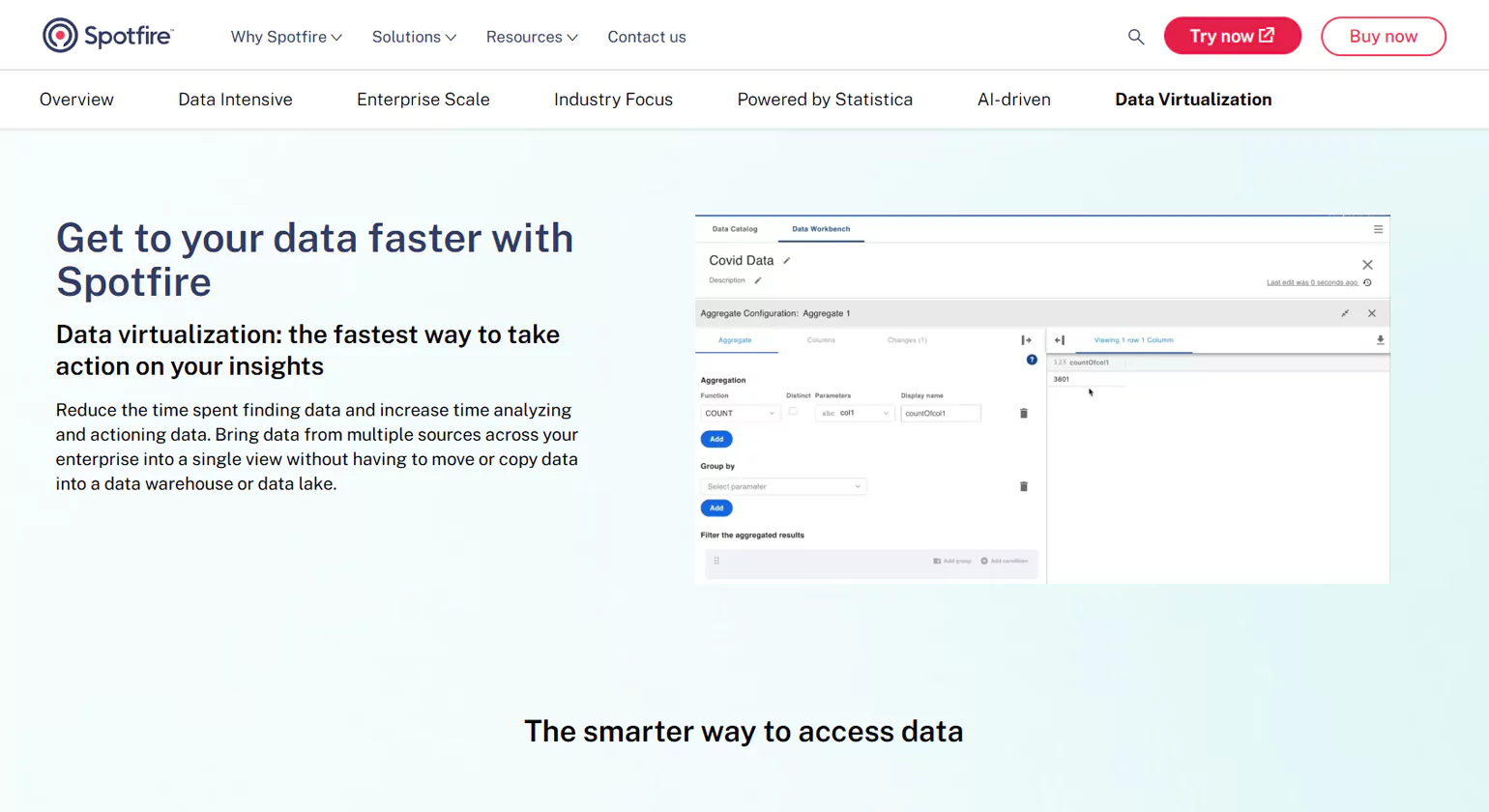

2. TIBCO Data Virtualization

TIBCO Data Virtualization is a powerful database virtualization tool designed to unify data from multiple sources without replication. By creating a virtualized data layer, it enables organizations to access, integrate, and analyze data in real time, significantly reducing complexity and improving agility.

Key Benefits

- Real-Time Data Access – Eliminates data silos and enables instant insights across diverse data sources.

- Seamless Integration – Connects to cloud, on-premises, and hybrid environments effortlessly.

- Enhanced Performance – Optimized query execution ensures high-speed data processing.

- Data Governance & Security – Provides role-based access, encryption, and compliance support.

- Agile & Scalable – Adapts to growing data needs with a flexible architecture.

Core Features

- Logical Data Layer – Unifies structured and unstructured data from various systems.

- Advanced Query Optimization – Improves analytics performance without data movement.

- Multi-Cloud & Hybrid Support – Works across AWS, Azure, Google Cloud, and private clouds.

- Self-Service Analytics – Empowers business users with easy access to enterprise data.

- Metadata Management – Enhances visibility and control over virtualized datasets.

3. IBM Cloud Pak for Data

IBM Cloud Pak for Data is a comprehensive data and AI platform that enables businesses to unify, manage, and analyze their data efficiently. Designed for enterprises seeking to integrate AI into their operations, this platform combines data virtualization with AI-powered analytics to drive automation, scalability, and cost optimization.

Unique Selling Points (USPs)

- AI-Powered Data Management: Leverages IBM’s watsonx to enhance data analytics, automation, and decision-making.

- End-to-End AI Integration: Supports AI-driven insights for customer service, marketing, HR, and IT operations.

- Hybrid Cloud & Multi-Cloud Flexibility: Enables data accessibility across public, private, and hybrid cloud environments.

- Industry-Specific AI Solutions: Tailored AI applications for financial services, telecommunications, healthcare, and more.

- Cost Optimization & Performance Boost: Helps organizations reduce cloud-related operating costs and optimize resource allocation.

Key Features

- Data Virtualization & Integration – Provides a unified data layer without physically moving data.

- AI-Powered Automation – Uses machine learning and AI models to streamline workflows and enhance efficiency.

- Hybrid & Multi-Cloud Compatibility – Ensures seamless data operations across on-premise and cloud environments.

- Watsonx AI Capabilities – AI models that drive business transformation through generative AI, automation, and analytics.

4. AtScale Virtual Data Warehouse

Founded in 2013 by former Yahoo data engineers, AtScale was created to solve the fundamental challenges of big data—scalability, query performance, and complex data pipelines. By leveraging a semantic layer, AtScale simplifies data access, accelerates insights, and enhances AI/ML capabilities. As a result, enterprises can maximize the value of their database virtualization tools while ensuring seamless business intelligence integration.

Unique Selling Points (USPs)

- Semantic Layer for Data Unification – Provides a consistent and governed data model across BI and AI applications.

- Self-Service Analytics – Empowers business users with intuitive data access, reducing reliance on IT teams.

- Optimized Query Performance – Uses intelligent query acceleration to deliver faster insights.

- AI/ML-Driven Decision Support – Enhances predictive analytics by streamlining data access for machine learning models.

- Enterprise-Scale Integration – Seamlessly works across cloud, hybrid, and on-premise environments.

Key Features

- Semantic Data Virtualization – Bridges the gap between raw data and business insights without data movement.

- Accelerated BI & AI Workflows – Enhances analytics efficiency through query optimization and intelligent caching.

- Self-Service Data Access – Enables non-technical users to explore and analyze data with ease.

- Multi-Cloud & Hybrid Compatibility – Supports leading cloud providers and on-premise databases.

- Governed Data Access & Security – Ensures compliance and governance with centralized data control.

5. CData Connect Cloud

CData is a leader in universal data connectivity, enabling businesses to integrate and interact with data from over 250 enterprise sources. With a focus on real-time access, CData’s solutions bridge the gap between SaaS, cloud, on-premise databases, and various analytics platforms. By leveraging database virtualization tools, organizations can eliminate data silos and ensure seamless data flow across applications.

Key Benefits

- Universal Data Access – Connect BI, analytics, ETL, and reporting tools to live data without complex integration.

- Seamless Application Connectivity – A standardized SQL-92 interface exposes every supported data source with minimal setup.

- Enhanced Query Performance – Optimized drivers and adapters ensure real-time data access and efficient query execution.

- Secure Data Management – Centralized access control simplifies governance and ensures compliance.

- Multi-Cloud & Hybrid Support – Works with modern and legacy applications across cloud and on-premise environments.

Core Features

- Extensive Connector Library – Supports SaaS, NoSQL, RDBMS, and Big Data sources.

- Optimized Data Drivers – Includes ODBC, JDBC, ADO.NET, and Python drivers for seamless integration.

- Enterprise-Grade Security – Provides a secure, managed data access layer for all applications.

- Self-Service Data Access – Enables business users, developers, and analysts to connect with data effortlessly.

- Scalable & Flexible Deployment – Deploy on-premise or in the cloud for maximum adaptability.

6. Data Virtuality Platform

Data Virtuality Platform is an advanced database virtualization tool that enables businesses to seamlessly integrate, manage, and analyze data from various sources without physical replication. By leveraging logical data warehousing and real-time data access, Data Virtuality empowers organizations to accelerate decision-making and enhance analytics capabilities.

Key Benefits

- Real-Time Data Integration – Connect and query data from multiple sources instantly without moving data.

- Automated Data Pipelines – Simplify ETL processes with automation and data modeling capabilities.

- Flexible Deployment – Supports on-premise, cloud, and hybrid environments for maximum adaptability.

- Performance Optimization – Smart caching and query optimization ensure fast and efficient data access.

- Secure & Scalable – Enterprise-grade security features protect sensitive data while enabling scalability.

Core Features

- Logical Data Warehouse – Combine multiple data sources into a single virtual layer.

- SQL-Based Querying – Use standard SQL to access and manipulate data seamlessly.

- Self-Service BI & Analytics – Empower business users with easy-to-use tools for data exploration.

- Comprehensive Connectivity – Supports databases, SaaS, APIs, and big data platforms.

- Data Governance & Compliance – Advanced access control ensures secure data management.

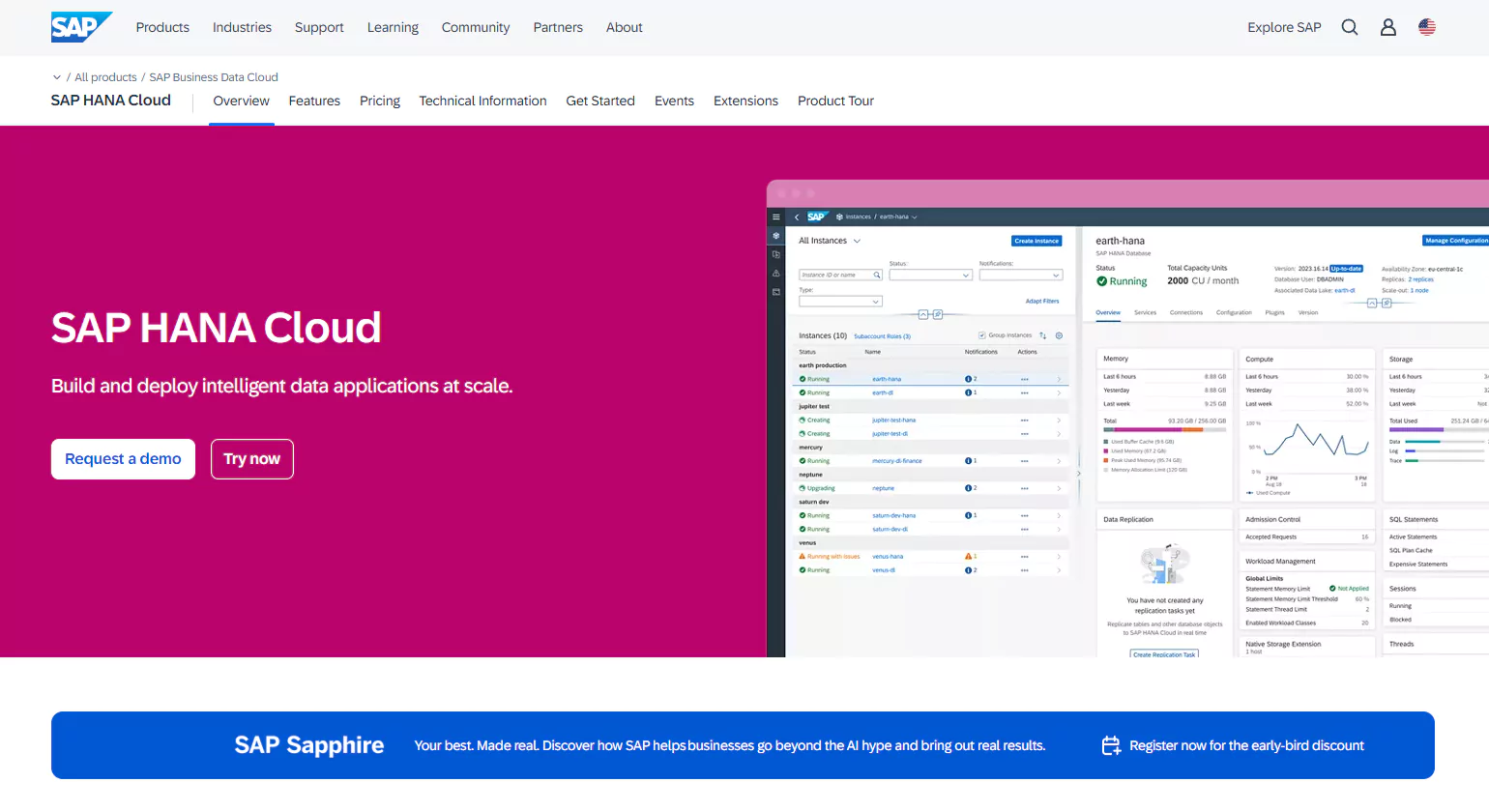

7. SAP HANA Cloud

SAP HANA Cloud is a modern cloud database with built-in data virtualization capabilities, giving businesses a unified platform for managing, integrating, and analyzing data in real time. As a fully managed service, it delivers high-performance data processing, enabling enterprises to drive smarter decision-making and optimize operations.

Key Benefits

- Real-Time Data Processing – Analyze and process massive datasets instantly.

- Flexible & Scalable – Scale resources dynamically to meet changing business needs.

- Unified Data Access – Seamlessly connect on-premise and cloud-based data sources.

- Advanced Analytics & AI – Leverage built-in machine learning and predictive analytics.

- Enterprise-Grade Security – Ensure compliance with robust encryption and data governance.

Core Features

- Multi-Model Database – Supports relational, document, graph, and spatial data models.

- Smart Data Integration – Access and virtualize data from multiple sources without duplication.

- High-Speed In-Memory Computing – Process complex queries with low latency.

- Cloud-Native Deployment – Fully managed service for simplified IT operations.

- Self-Service BI & Reporting – Empower users with intuitive data exploration tools.

8. Red Hat JBoss Data Virtualization

Red Hat JBoss Data Virtualization is an advanced database virtualization tool designed to unify data from multiple sources without requiring physical replication. It enables enterprises to create a real-time, virtualized data layer that simplifies access to disparate databases, cloud services, and applications.

Key Benefits

- Unified Data Access – Combine structured and unstructured data from diverse sources.

- Accelerated Insights – Deliver real-time analytics without moving or duplicating data.

- Enhanced Agility – Quickly adapt to evolving business needs with a flexible data architecture.

- Enterprise-Grade Security – Maintain compliance with robust access controls and encryption.

- Seamless Cloud & On-Prem Integration – Connect hybrid environments effortlessly.

Core Features

- Data Federation – Aggregate and query data from multiple sources in real time.

- High-Performance Query Optimization – Boost efficiency with intelligent query processing.

- Scalable & Open Architecture – Integrate with a variety of databases, cloud platforms, and applications.

- Self-Service Data Access – Empower users with an intuitive interface for querying virtualized data.

- Support for Modern Workloads – Enable AI, ML, and advanced analytics with streamlined data access.

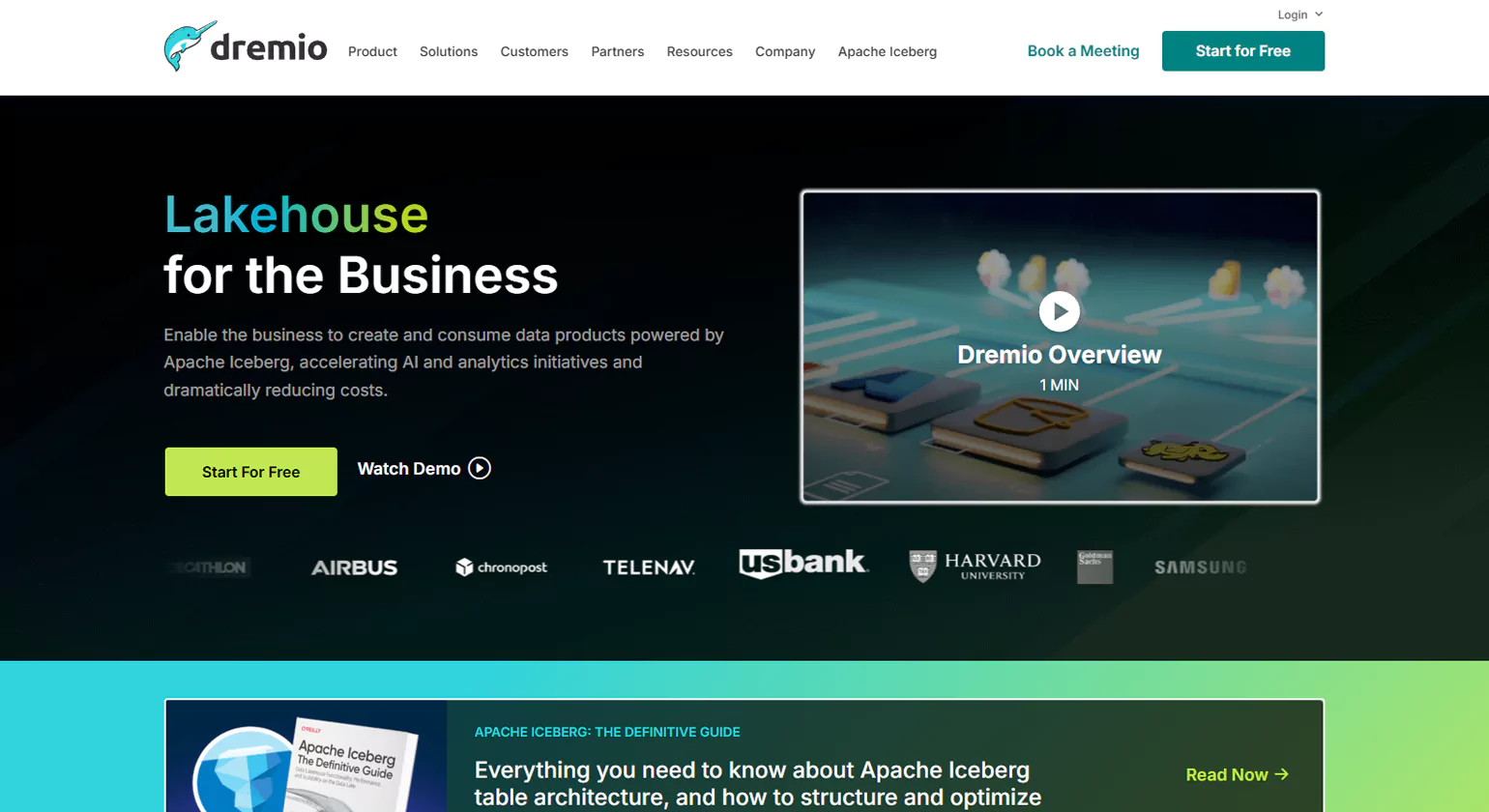

9. Dremio

Dremio is a cutting-edge database virtualization tool that enables organizations to query, analyze, and explore data directly in their data lakes, without the need for complex ETL processes. With a focus on speed, scalability, and cost efficiency, Dremio helps businesses make data-driven decisions faster.

Key Benefits

- SQL Query Acceleration – Optimize query performance with advanced caching and indexing.

- No ETL Required – Eliminate time-consuming data movement and replication.

- Seamless Integration – Connects with cloud storage, on-premise databases, and BI tools.

- Self-Service Analytics – Empower business users with easy-to-use data exploration.

- Cost-Effective Scaling – Reduce infrastructure costs with a modern, cloud-native architecture.

Core Features

- Data Virtualization – Query data across multiple sources in real-time without moving it.

- Apache Iceberg & Arrow Optimization – Leverage open-source technologies for high-speed analytics.

- Lakehouse Architecture – Combine the flexibility of a data lake with the performance of a data warehouse.

- Security & Governance – Built-in access controls and data lineage tracking ensure compliance.

- Multi-Cloud & Hybrid Support – Deploy on AWS, Azure, Google Cloud, or on-premises.

10. Informatica PowerCenter

Informatica PowerCenter is an established enterprise data integration platform, best known for ETL, that also offers data virtualization capabilities. Designed for scalability, flexibility, and high performance, PowerCenter enables organizations to streamline their data workflows, ensuring seamless access, transformation, and delivery of critical business information.

Key Benefits

- Enterprise-Scale Data Integration – Connects and integrates data from diverse sources, both on-premises and in the cloud.

- Robust ETL Processing – Extract, transform, and load data efficiently for business intelligence and analytics.

- Data Governance & Compliance – Ensures high-quality data with built-in governance and security features.

- High Performance & Scalability – Supports massive data volumes and complex integration scenarios.

- Automation & Workflow Management – Simplifies data orchestration with automation and AI-driven optimizations.

Core Features

- Advanced Data Virtualization – Access and integrate data in real time without physical movement.

- Comprehensive Connectivity – Works with databases, cloud applications, big data platforms, and more.

- Metadata-Driven Architecture – Enhances visibility and control over data processes.

- AI-Powered Automation – Leverages Informatica’s CLAIRE AI for intelligent data processing.

- Secure & Compliant – Ensures data security, privacy, and regulatory compliance.

Key Components of Database Virtualization Tools

Database virtualization tools are built from a few key components that together enhance data accessibility, security, and performance. These components let businesses manage complex data environments without physically moving or duplicating data, so organizations can make data-driven decisions more efficiently. As a result, these solutions play a crucial role in modernizing data management strategies.

1. Virtual Database Layer

The virtual database layer acts as an abstraction between users and underlying databases, allowing seamless access to data from multiple sources. It eliminates the need for data replication, reducing storage costs and complexity. By providing a unified view, it simplifies data management and enhances efficiency.

- Unified data view: Combines multiple databases into one interface.

- No data duplication: Reduces storage and maintenance costs.

- Simplified management: Streamlines access to distributed data.
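A hypothetical sketch of the virtual database layer, assuming each source can be wrapped as a simple fetch function. The `VirtualLayer` class, the `sqlite_fetcher` adapter, and the table names are invented for illustration. The sketch shows the two properties described above: a single catalog of names, and no copying when a source is registered.

```python
import sqlite3
from typing import Callable, Dict, Iterable, List

Row = Dict[str, object]


class VirtualLayer:
    """Hypothetical minimal virtual database layer.

    Logical table names map to fetch callables. Registering a source copies
    no data; every read is forwarded to the underlying system.
    """

    def __init__(self) -> None:
        self._sources: Dict[str, Callable[[], Iterable[Row]]] = {}

    def register(self, name: str, fetch: Callable[[], Iterable[Row]]) -> None:
        self._sources[name] = fetch

    def tables(self) -> List[str]:
        # The unified view: one catalog of names regardless of where data lives.
        return sorted(self._sources)

    def read(self, name: str) -> List[Row]:
        # Data is pulled at read time, so results reflect the source's current state.
        return list(self._sources[name]())


def sqlite_fetcher(con: sqlite3.Connection, table: str) -> Callable[[], List[Row]]:
    """Adapter for a relational source. `table` must be a trusted identifier."""
    def fetch() -> List[Row]:
        cur = con.execute(f"SELECT * FROM {table}")
        cols = [d[0] for d in cur.description]
        return [dict(zip(cols, r)) for r in cur.fetchall()]
    return fetch


def build_demo():
    con = sqlite3.connect(":memory:")
    con.execute("CREATE TABLE products (sku TEXT, price REAL)")
    con.execute("INSERT INTO products VALUES ('A1', 9.5)")
    tickets = [{"id": 1, "status": "open"}]  # stands in for a SaaS API response

    layer = VirtualLayer()
    layer.register("products", sqlite_fetcher(con, "products"))
    layer.register("tickets", lambda: tickets)
    return layer, con


if __name__ == "__main__":
    layer, con = build_demo()
    print(layer.tables())               # ['products', 'tickets']
    con.execute("INSERT INTO products VALUES ('B2', 20.0)")
    print(len(layer.read("products")))  # 2: the new row is visible without a refresh
```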

2. Data Access and Integration Layer

This layer ensures smooth communication between various data sources and applications by enabling real-time data integration. It supports different database formats, making it easier to query and analyze data. With improved connectivity, businesses can make faster and more informed decisions.

- Real-time integration: Connects and updates data instantly.

- Multi-format support: Works with SQL, NoSQL, and cloud databases.

- Enhanced connectivity: Bridges diverse systems for seamless access.
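A large part of the integration layer's job is mapping each source's native format onto one shape. The sketch below assumes a relational `clients` table and a JSON document feed with nested fields; the `Customer` record and both connector functions are hypothetical.

```python
import json
import sqlite3
from dataclasses import dataclass
from typing import Iterator, List


@dataclass(frozen=True)
class Customer:
    """Common record shape that every connector maps its native format into."""
    customer_id: int
    name: str
    country: str


def sql_connector(con: sqlite3.Connection) -> Iterator[Customer]:
    # Relational source: flat rows, only the column names differ.
    for cid, full_name, cc in con.execute(
        "SELECT id, full_name, country_code FROM clients"
    ):
        yield Customer(cid, full_name, cc)


def document_connector(raw_json: str) -> Iterator[Customer]:
    # Document (NoSQL-style) source: string IDs and nested fields are normalized.
    for doc in json.loads(raw_json):
        yield Customer(int(doc["_id"]), doc["profile"]["name"], doc["address"]["country"])


def integrated_customers(con: sqlite3.Connection, raw_json: str) -> List[Customer]:
    """Merge both sources into one list with a single schema."""
    merged = [*sql_connector(con), *document_connector(raw_json)]
    return sorted(merged, key=lambda c: c.customer_id)


def build_demo():
    con = sqlite3.connect(":memory:")
    con.execute("CREATE TABLE clients (id INTEGER, full_name TEXT, country_code TEXT)")
    con.execute("INSERT INTO clients VALUES (1, 'Ada', 'GB')")
    docs = json.dumps([
        {"_id": "2", "profile": {"name": "Lin"}, "address": {"country": "SG"}}
    ])
    return con, docs


if __name__ == "__main__":
    con, docs = build_demo()
    for customer in integrated_customers(con, docs):
        print(customer)
```

Downstream consumers only ever see `Customer` records, so adding a new source means writing one more connector rather than changing every report.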

3. Security and Access Control

Robust security measures protect sensitive data from unauthorized access, ensuring compliance with regulations. This component includes encryption, authentication, and role-based permissions to safeguard information. By enforcing strict controls, it minimizes security risks and data breaches.

- Access control: Restricts data based on user roles.

- Data encryption: Protects sensitive information from threats.

- Regulatory compliance: Ensures adherence to industry standards.
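In a virtual layer, access control can be enforced on query results before they reach the user. This sketch shows only role-based column masking with a deny-by-default rule; the roles, columns, and `POLICY` table are hypothetical, and encryption and authentication are not shown.

```python
from typing import Dict, List, Set

Row = Dict[str, object]

# Hypothetical policy: the columns each role may see unmasked.
POLICY: Dict[str, Set[str]] = {
    "analyst": {"id", "region", "amount"},
    "auditor": {"id", "region", "amount", "email"},
}


def apply_policy(role: str, rows: List[Row]) -> List[Row]:
    """Enforce role-based column access before results leave the virtual layer."""
    if role not in POLICY:
        # Deny by default: unknown roles get nothing.
        raise PermissionError(f"unknown role: {role!r}")
    allowed = POLICY[role]
    return [
        {col: (val if col in allowed else "***") for col, val in row.items()}
        for row in rows
    ]


if __name__ == "__main__":
    rows = [{"id": 1, "region": "EU", "amount": 120.0, "email": "ana@example.com"}]
    print(apply_policy("analyst", rows))  # email is masked
    print(apply_policy("auditor", rows))  # email is visible
```

Because every query passes through one layer, a single policy like this applies to all underlying sources at once.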

4. Performance Optimization Mechanisms

Performance optimization mechanisms enhance query execution speed and overall system efficiency. These include caching, indexing, and workload balancing to minimize latency. By reducing bottlenecks, businesses can improve responsiveness and data availability.

- Faster queries: Uses indexing and caching for quick retrieval.

- Load balancing: Distributes workload efficiently.

- Reduced latency: Optimizes system performance for smooth operations.
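Caching is the simplest of these mechanisms to illustrate. Below is a sketch of a time-to-live (TTL) result cache, assuming the query text is used as the cache key; `TTLCache` is a hypothetical class, not a vendor API.

```python
import time
from typing import Callable, Dict, Hashable, Tuple


class TTLCache:
    """Hypothetical query-result cache: reuse a result for `ttl` seconds."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl
        self.clock = clock  # injectable so the behavior can be tested deterministically
        self._store: Dict[Hashable, Tuple[float, object]] = {}
        self.source_calls = 0  # how many times the real source was queried

    def get(self, key: Hashable, load: Callable[[], object]) -> object:
        now = self.clock()
        cached = self._store.get(key)
        if cached is not None and now - cached[0] < self.ttl:
            return cached[1]  # cache hit: the source is not touched
        self.source_calls += 1
        value = load()  # cache miss or expired entry: go to the source
        self._store[key] = (now, value)
        return value


if __name__ == "__main__":
    fake_now = [0.0]
    cache = TTLCache(ttl=30, clock=lambda: fake_now[0])
    query = "SELECT region, SUM(amount) FROM sales GROUP BY region"
    cache.get(query, lambda: [("EU", 100.0)])
    cache.get(query, lambda: [("EU", 100.0)])
    print(cache.source_calls)  # 1: second call served from cache
    fake_now[0] = 31.0
    cache.get(query, lambda: [("EU", 140.0)])
    print(cache.source_calls)  # 2: entry expired, source queried again
```

The trade-off is freshness: within the TTL window users may see results up to `ttl` seconds old, so cache lifetimes usually need tuning per dataset.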

Demystifying Data Virtualization: How It Works and Why It’s Important for Enterprises

Data virtualization allows enterprises to access and manage data from multiple sources without physically moving it. By creating a unified virtual layer, businesses can simplify data integration, enable real-time analytics, and improve decision-making. It reduces complexity, accelerates digital transformation, and ensures secure, efficient access to enterprise-wide information.

Understanding the Architecture of Data Virtualization

Data virtualization relies on a layered architecture that separates physical data storage from user access. The virtual layer connects diverse sources—databases, cloud storage, and applications—while providing a unified interface. This architecture enables consistent data representation, central governance, and seamless integration without disrupting underlying systems.

Key Components of Data Virtualization Architecture

- Virtual Data Layer: Abstracts data from physical sources

- Data Connectors: Link multiple structured and unstructured sources

- Query Engine: Processes user requests across sources

- Security and Governance Layer: Ensures data compliance and access control

How Data Virtualization Integrates Multiple Data Sources

Data virtualization integrates structured, semi-structured, and unstructured data from multiple systems in real time. It reduces the need for ETL pipelines and physical replication, which lowers data latency and storage costs. Users access a consolidated view through a single interface, enabling cross-functional insights without complex data movement.
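One way a query engine combines sources is to fetch from each system and perform the join itself. The sketch below is a hypothetical in-memory hash join between a SQLite `orders` table and a hard-coded list standing in for a SaaS API response; all names are illustrative, and real engines also decide how much work to push down to each source.

```python
import sqlite3
from typing import Dict, List

Row = Dict[str, object]


def fetch_orders(con: sqlite3.Connection) -> List[Row]:
    """Source 1: a relational database."""
    cur = con.execute("SELECT order_id, customer_id, amount FROM orders ORDER BY order_id")
    return [dict(zip(("order_id", "customer_id", "amount"), r)) for r in cur]


def fetch_customers() -> List[Row]:
    """Source 2: stand-in for a live SaaS/REST call (hard-coded for the sketch)."""
    return [{"customer_id": 1, "name": "Acme"}, {"customer_id": 2, "name": "Globex"}]


def federated_join(orders: List[Row], customers: List[Row]) -> List[Row]:
    """Inner hash join done in the virtual layer, not in either source."""
    by_id = {c["customer_id"]: c for c in customers}  # build side
    joined = []
    for o in orders:  # probe side
        match = by_id.get(o["customer_id"])
        if match is not None:
            joined.append({**o, "name": match["name"]})
    return joined


def build_orders_db() -> sqlite3.Connection:
    con = sqlite3.connect(":memory:")
    con.execute("CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL)")
    con.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(10, 1, 100.0), (11, 2, 75.0), (12, 3, 5.0)],  # customer 3 has no CRM record
    )
    return con


if __name__ == "__main__":
    con = build_orders_db()
    for row in federated_join(fetch_orders(con), fetch_customers()):
        print(row)
```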

Real-Time Data Access and Query Processing Explained

Data virtualization enables real-time query processing by fetching only the necessary data from connected sources on demand. Advanced query optimization and caching techniques ensure fast performance, while APIs and connectors allow integration with BI tools, analytics platforms, and applications for timely insights and reporting.
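"Fetching only the necessary data" usually relies on predicate pushdown: sending the filter to the source instead of filtering after transfer. This sketch assumes a single SQLite table as the remote source and uses a hypothetical `CountingSource` wrapper to compare the rows transferred under each plan.

```python
import sqlite3
from typing import List, Sequence, Tuple


class CountingSource:
    """Wraps a source connection and counts rows shipped back to the virtual layer."""

    def __init__(self, con: sqlite3.Connection) -> None:
        self.con = con
        self.rows_transferred = 0

    def query(self, sql: str, params: Sequence = ()) -> List[Tuple]:
        rows = self.con.execute(sql, params).fetchall()
        self.rows_transferred += len(rows)
        return rows


def fetch_all_then_filter(src: CountingSource, region: str) -> List[Tuple]:
    # Naive plan: pull every row, filter in the virtual layer.
    rows = src.query("SELECT id, region, amount FROM sales ORDER BY id")
    return [r for r in rows if r[1] == region]


def push_down_filter(src: CountingSource, region: str) -> List[Tuple]:
    # Pushdown plan: the source evaluates WHERE, so only matching rows travel.
    return src.query(
        "SELECT id, region, amount FROM sales WHERE region = ? ORDER BY id", (region,)
    )


def make_source() -> CountingSource:
    con = sqlite3.connect(":memory:")
    con.execute("CREATE TABLE sales (id INTEGER PRIMARY KEY, region TEXT, amount REAL)")
    con.executemany(
        "INSERT INTO sales (region, amount) VALUES (?, ?)",
        [("EU" if i % 4 == 0 else "US", float(i)) for i in range(1000)],
    )
    return CountingSource(con)


if __name__ == "__main__":
    naive, pushed = make_source(), make_source()
    same = fetch_all_then_filter(naive, "EU") == push_down_filter(pushed, "EU")
    print(same, naive.rows_transferred, pushed.rows_transferred)  # True 1000 250
```

Both plans return the same 250 rows, but the pushdown plan moves a quarter as much data, which illustrates why pushdown matters when sources sit across a network.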

Enhancing Enterprise Decision-Making with Data Virtualization

By providing a unified, accurate, and up-to-date view of enterprise data, virtualization empowers better strategic decisions. Teams can analyze trends, detect anomalies, and generate reports quickly. This agility improves responsiveness, supports predictive analytics, and drives data-driven strategies across departments.

Cost, Efficiency, and Strategic Advantages for Businesses

Data virtualization reduces storage costs, simplifies IT management, and eliminates redundant data replication. It enhances operational efficiency, accelerates analytics deployment, and ensures compliance through centralized governance. Enterprises benefit from faster ROI, improved scalability, and the ability to adapt quickly to market changes while leveraging their existing infrastructure.

Advantages of Using Database Virtualization Tools

1. Improved Scalability and Performance

- Seamless scalability expands data operations without extra hardware.

- Optimized performance speeds up queries and reduces latency.

- Efficient resource usage lowers storage and computing demands.

- Handles large datasets without compromising speed.

2. Faster Development and Testing

- Quick setup eliminates the need for physical test environments.

- Real-time access uses live data for accurate testing.

- Faster deployments speed up application releases.

- Reduces delays in software development cycles.

3. Cost-Effective Data Management

- Fewer redundant data copies lower storage costs.

- Avoiding costly hardware upgrades keeps infrastructure expenses low.

- Optimized resource allocation maximizes IT budget efficiency.

- Reduces maintenance costs by eliminating data duplication.

4. Enhanced Security and Compliance

- Strict access control restricts data based on user roles.

- Data encryption protects sensitive information.

- Regulatory compliance ensures adherence to industry standards.

- Prevents unauthorized access while maintaining data integrity.

5. Simplified Data Access and Integration

- Unified data view combines multiple sources into one interface.

- Cross-platform integration supports cloud and on-premises systems.

- Easy accessibility enables real-time data retrieval.

- Reduces operational complexity for seamless data usage.

Limitations of Database Virtualization Tools

Despite its advantages, data virtualization comes with certain challenges that organizations must consider. Performance overhead, implementation complexity, security risks, and infrastructure dependencies can impact efficiency. Without proper optimization, queries may slow down, and integration issues may arise. Understanding these limitations helps businesses plan better and mitigate potential risks.

1. Potential Performance Overhead

While data virtualization improves data access, it may introduce performance overhead because every query passes through an additional processing layer. The virtualization layer requires extra computing resources, which can slow down response times. As a result, high-volume workloads may experience latency issues.

- Extra processing can increase system load

- High-volume queries may lead to slower performance

- Resource-intensive operations require optimization

2. Complexity in Implementation

Setting up data virtualization can be complex, especially for organizations with diverse data environments. Integrating multiple databases, configuring security policies, and ensuring smooth data flow require expertise. Without proper planning, implementation challenges can arise.

- Requires expertise in database integration

- Complex configurations may lead to compatibility issues

- Proper planning is essential for smooth deployment

3. Security and Compliance Challenges

Although virtualization tools enhance security, they also introduce risks related to data privacy and compliance, because a single access layer now reaches many sensitive sources. Organizations must enforce strict access controls and encryption to protect sensitive data. Gaps in these controls can lead to security breaches and failed compliance audits.

- Data privacy risks require strong security measures

- Compliance with industry regulations can be challenging

- Unauthorized access must be prevented with strict controls

4. Dependence on Infrastructure and Compatibility

The effectiveness of data virtualization depends on existing infrastructure and system compatibility. Some legacy databases may not fully support virtualization, leading to integration challenges. Additionally, network bandwidth and storage limitations can impact performance.

- Legacy systems may face compatibility issues

- Network bandwidth can affect data retrieval speed

- Infrastructure limitations may require upgrades

Is Data Virtualization the New Database?

Data virtualization is transforming how enterprises access and use data, but it isn’t a replacement for traditional databases. Instead, it provides a virtual layer that integrates multiple data sources in real time. By complementing existing databases, it enables faster insights, reduces data duplication, and simplifies analytics, making it a strategic tool for modern data-driven organizations.

Understanding the Difference Between Data Virtualization and Traditional Databases

Traditional databases store structured data physically in tables, requiring ETL for integration and analysis. Data virtualization, on the other hand, creates a virtual layer that provides access to data from multiple sources without moving it. This approach focuses on real-time access, integration flexibility, and minimizing storage overhead.

How Data Virtualization Complements Existing Database Systems

Data virtualization works alongside traditional databases by aggregating data from multiple systems—cloud, on-premise, or hybrid—into a unified view. It reduces the need for extensive ETL processes, improves reporting speed, and enables seamless integration with BI and analytics tools, enhancing the value of existing database investments.

Key Advantages of Data Virtualization Over Conventional Databases

Data virtualization provides several benefits over traditional databases, including real-time data access, faster analytics, reduced storage costs, simplified data integration, and improved scalability. Enterprises can unify structured and unstructured data sources, supporting better decision-making without duplicating or physically moving large datasets.

Real-Time Data Access vs. Stored Data in Databases

Unlike data warehouses populated through batch ETL and replication, data virtualization queries live data across multiple systems at request time. This enables timely insights, more responsive analytics, and quicker operational decisions, because users see the current state of the source systems rather than the last loaded copy.
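The difference between stored copies and live access can be shown inside a single SQLite database as an analogy: a table created with `CREATE TABLE ... AS SELECT` behaves like an ETL copy, while a `VIEW` stores only the query. The table names are hypothetical; virtualization tools apply the view idea across separate systems.

```python
import sqlite3
from typing import Tuple


def copy_vs_view() -> Tuple[int, int]:
    con = sqlite3.connect(":memory:")
    con.execute("CREATE TABLE source_orders (id INTEGER, amount REAL)")
    con.execute("INSERT INTO source_orders VALUES (1, 10.0)")

    # ETL-style: physically copy the rows as they exist right now.
    con.execute("CREATE TABLE warehouse_orders AS SELECT * FROM source_orders")
    # Virtualization-style: store only the query; rows are read on demand.
    con.execute("CREATE VIEW virtual_orders AS SELECT * FROM source_orders")

    # The source changes after the copy was taken.
    con.execute("INSERT INTO source_orders VALUES (2, 25.0)")

    copied = con.execute("SELECT COUNT(*) FROM warehouse_orders").fetchone()[0]
    live = con.execute("SELECT COUNT(*) FROM virtual_orders").fetchone()[0]
    con.close()
    return copied, live


if __name__ == "__main__":
    print(copy_vs_view())  # (1, 2): the copy is stale until the next ETL run
```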

Use Cases Where Data Virtualization Outperforms Traditional Databases

High-Impact Use Cases

Data virtualization excels in scenarios such as:

- Enterprise reporting and dashboards requiring multi-source data

- Real-time analytics and predictive modeling

- Cloud and hybrid data integration

- Mergers and acquisitions with diverse IT systems

- Reducing ETL and data warehousing dependency

Challenges and Limitations Compared to Standard Database Solutions

While powerful, data virtualization has limitations. Performance may lag for extremely large datasets, complex transformations, or poorly optimized queries. Security and compliance require robust governance, and some legacy applications may not integrate seamlessly. Understanding these constraints is crucial for effective implementation.

Should Enterprises Replace Databases with Data Virtualization?

Data virtualization is not a full replacement for databases but a complementary solution. Enterprises benefit most when virtualization enhances data access, analytics, and integration without disrupting existing database infrastructure. A hybrid approach maximizes flexibility, efficiency, and ROI, leveraging both virtualization and traditional database strengths.

How to Choose the Right Database Virtualization Tool for Your Business

Selecting the right solution requires careful evaluation of business needs, technical compatibility, and long-term goals. Since different tools offer varying features, it is essential to assess key factors before deciding. Additionally, a well-planned implementation strategy ensures seamless integration and optimal performance.

Factors to Consider

Before choosing a tool, businesses must evaluate its capabilities, scalability, and security features. A well-suited solution should align with the organization’s data infrastructure and operational requirements.

- Assess scalability to ensure future growth and data expansion

- Verify compatibility with existing databases and cloud platforms

- Evaluate security features, including encryption and access controls

- Consider ease of integration with business applications

Implementation Strategies

A successful implementation requires a step-by-step approach to avoid disruptions. Proper planning, testing, and user training can streamline the transition and maximize efficiency.

- Conduct a thorough assessment of current data infrastructure

- Develop a phased deployment plan to minimize downtime

- Test the tool in a controlled environment before full integration

- Train employees to optimize adoption and usage

Conclusion

Database virtualization tools offer a powerful solution for managing and integrating data across multiple platforms. By enhancing scalability, security, and accessibility, they help businesses streamline operations and reduce costs. However, selecting the right tool requires careful evaluation of features, compatibility, and implementation strategies. While these tools come with challenges, proper planning and optimization can maximize their benefits. Ultimately, investing in the right solution enables organizations to improve data efficiency and decision-making.


FAQ

1. What is database virtualization and how does it work?

Database virtualization creates a unified data layer that lets users access information without needing to know where it’s stored. It works by abstracting physical databases and presenting them as a single virtual source. This improves data accessibility, reduces storage duplication, speeds up deployment, and simplifies complex database environments.

2. What are data virtualization solutions?

Data virtualization solutions allow organizations to integrate data from multiple sources in real time without moving or replicating it. These platforms provide a single access point for analytics, reporting, and applications. They help improve data agility, reduce ETL workloads, and support faster decision-making by delivering up-to-date information instantly.

3. What is data virtualisatie (data virtualization) and why is it used?

Data virtualisatie (Dutch for data virtualization) is used to combine data from various systems into one virtual layer for easier access. It eliminates physical duplication and enables real-time insights. Companies use it to simplify data integration, cut storage costs, boost analytics performance, and accelerate digital transformation initiatives.

4. What is data virtualization software and how does it benefit businesses?

Data virtualization software connects multiple data sources and presents them as one unified view for users and applications. It benefits businesses by enabling faster data delivery, reducing integration complexity, improving analytics, and eliminating costly data replication. This leads to better decision-making, lower operational overhead, and improved data governance.

5. What are the key benefits of database virtualization tools?

Database virtualization tools offer benefits such as simplified data access, real-time integration, enhanced security, reduced storage costs, and faster analytics. They allow multiple data sources to be unified virtually, improve decision-making, minimize duplication, and provide flexibility for reporting and development without altering the underlying physical databases.